Publication

ConceptDistil: Model-Agnostic Distillation of Concept Explanations

Published at ICLR 2022 - PAIR2Struct workshop

Abstract

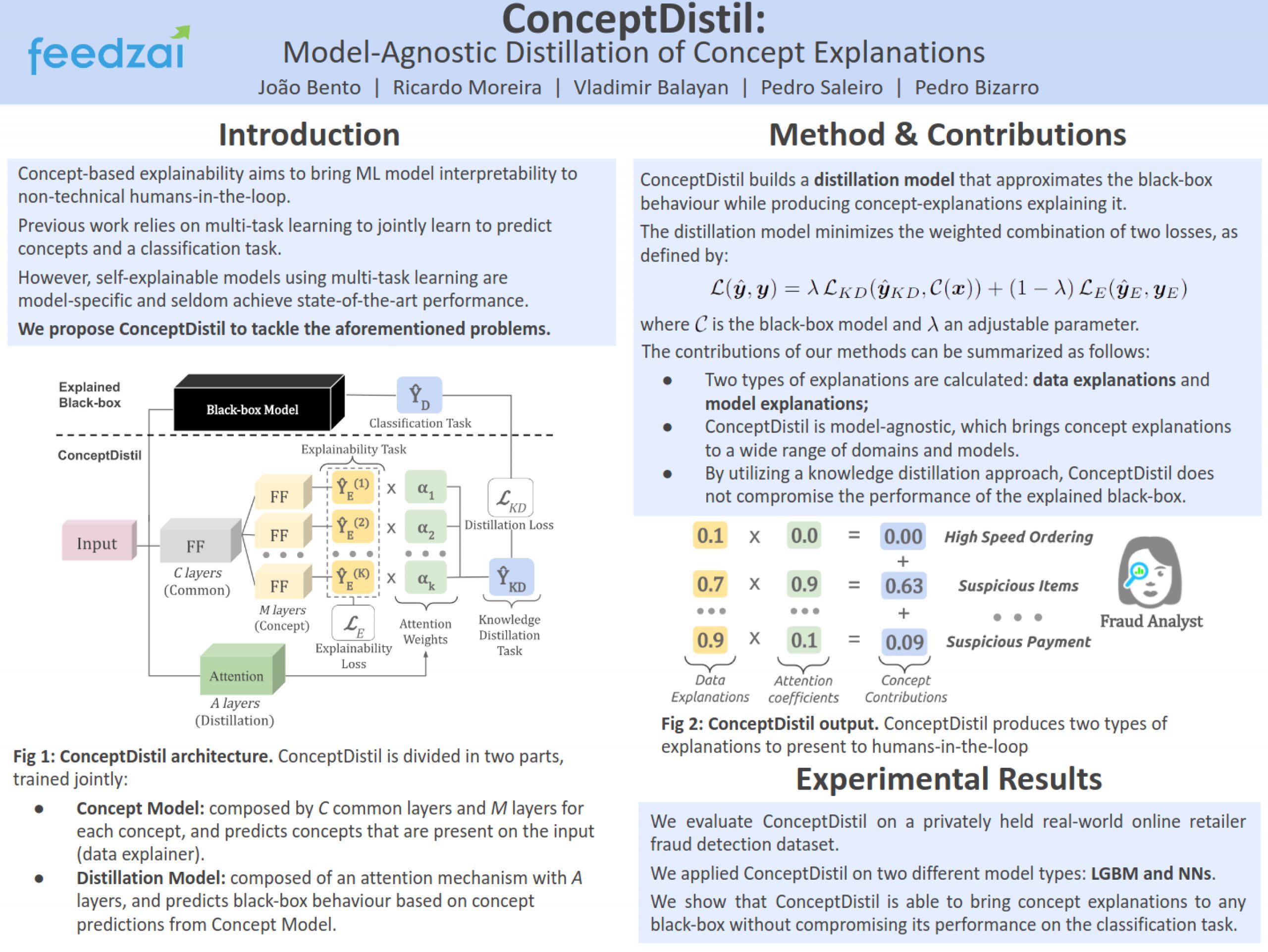

Concept-based explanations aim to fill the model interpretability gap for non-technical humans-in-the-loop. Previous work has focused on providing concepts for specific models (e.g., neural networks) or data types (e.g., images), either by extracting concepts from an already trained network or by training self-explainable models through multi-task learning. In this work, we propose ConceptDistil, a method to bring concept explanations to any black-box classifier using knowledge distillation. ConceptDistil is decomposed into two components: (1) a concept model that predicts which domain concepts are present in a given instance, and (2) a distillation model that tries to mimic the predictions of a black-box model using the concept model predictions. We validate ConceptDistil in a real-world use case, showing that it is able to optimize both tasks, bringing concept-explainability to any black-box model.
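The two-component structure described in the abstract can be illustrated with a minimal sketch. The abstract does not specify architectures or loss functions, so everything here is an assumption made for illustration: both components are single logistic layers, both tasks use binary cross-entropy, and the weighting parameter `alpha`, the function names, and the toy numbers are hypothetical rather than taken from the paper.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def bce(target, prob, eps=1e-12):
    """Binary cross-entropy; also valid for soft targets in [0, 1]."""
    prob = min(max(prob, eps), 1.0 - eps)
    return -(target * math.log(prob) + (1.0 - target) * math.log(1.0 - prob))


def concept_model(x, W, b):
    """Component (1): one probability per domain concept (assumed logistic layer)."""
    return [
        sigmoid(sum(w_i * x_i for w_i, x_i in zip(row, x)) + b_k)
        for row, b_k in zip(W, b)
    ]


def distillation_model(concept_probs, v, c):
    """Component (2): estimate the black-box score from concept predictions only."""
    return sigmoid(sum(v_k * p_k for v_k, p_k in zip(v, concept_probs)) + c)


def joint_loss(x, concept_labels, black_box_score, params, alpha=0.5):
    """Weighted sum of the concept task loss and the distillation task loss.

    alpha is a hypothetical trade-off weight, not a value from the paper.
    """
    W, b, v, c = params
    probs = concept_model(x, W, b)
    concept_loss = sum(bce(t, p) for t, p in zip(concept_labels, probs)) / len(probs)
    distill_loss = bce(black_box_score, distillation_model(probs, v, c))
    return alpha * concept_loss + (1.0 - alpha) * distill_loss


# Toy instance: 3 input features, 2 concepts, made-up parameters.
x = [0.2, -1.0, 0.5]
W = [[0.1, -0.3, 0.8], [-0.5, 0.2, 0.0]]
b = [0.0, 0.1]
v = [1.5, -2.0]
c = 0.0
params = (W, b, v, c)

probs = concept_model(x, W, b)             # concept explanation for x
loss = joint_loss(x, [1, 0], 0.9, params)  # 0.9 stands in for a black-box score
```

In this sketch the distillation model only sees the concept probabilities, never the raw features, so any agreement with the black-box score has to pass through the concepts. That is one plausible reading of "using the concept model predictions"; the actual method may differ.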