Publication

Uncertainty-Aware Systems for Human-AI Collaboration

Published in Transactions on Machine Learning Research

Abstract

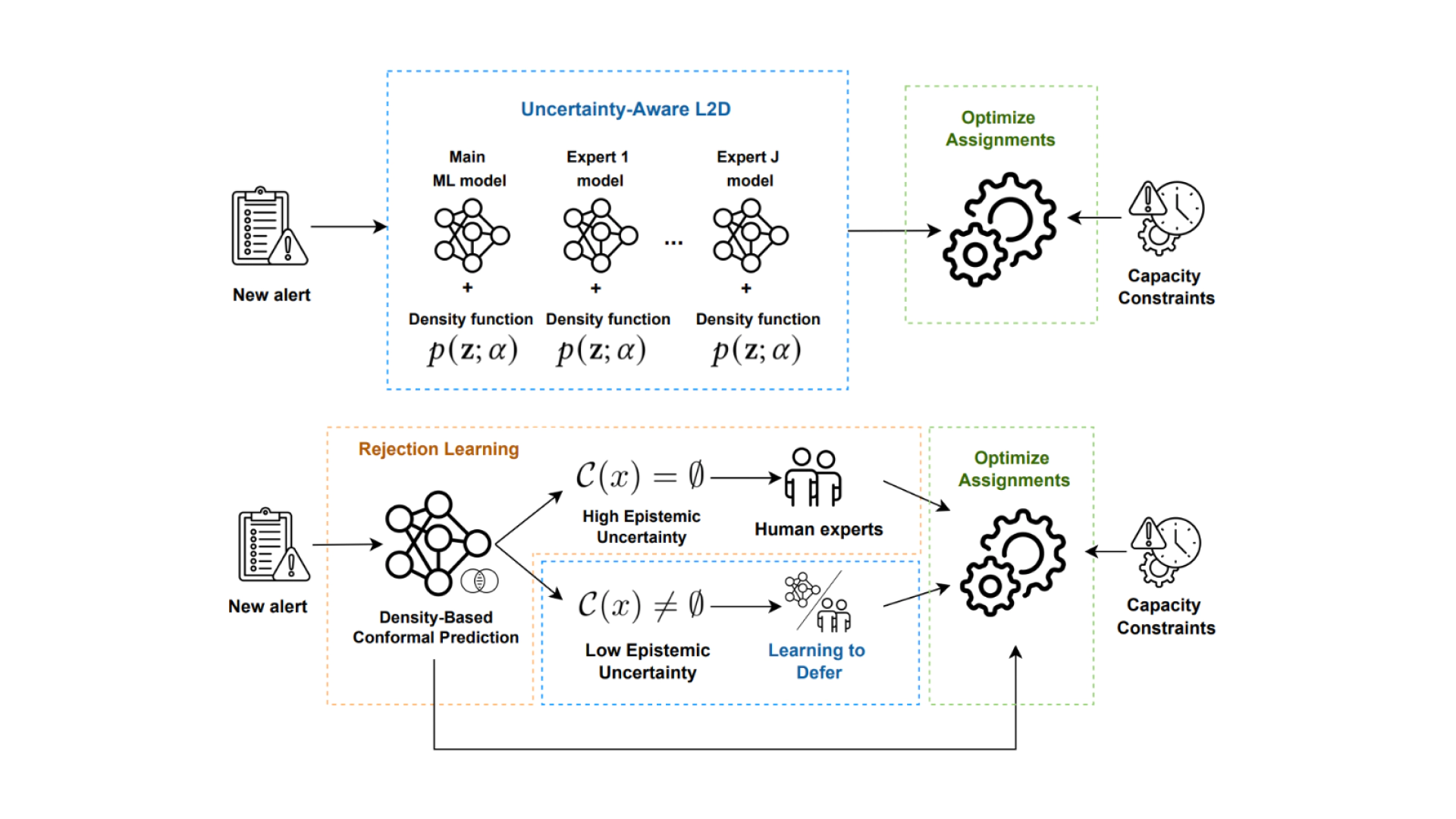

Learning to defer (L2D) algorithms improve human-AI collaboration (HAIC) by deferring decisions to human experts when they are more likely to be correct than the AI model. This framework hinges on machine learning (ML) models’ ability to assess their own certainty and that of human experts. However, L2D struggles in dynamic environments, where distribution shifts impair deferral decisions. We argue that robust HAIC in dynamic environments requires uncertainty-driven policy switching rather than reliance on a single deferral strategy. To operationalize this principle, we introduce two uncertainty-aware approaches that estimate epistemic uncertainty to guide the choice of deferral policy. Both methods are the first uncertainty-aware approaches for HAIC that also address limitations of L2D systems, including cost-sensitive scenarios, limited human predictions, and capacity constraints. Empirical evaluation in fraud detection shows that both approaches outperform state-of-the-art baselines while improving calibration and supporting real-world adoption.
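To make the idea of uncertainty-driven policy switching concrete, the following is a minimal illustrative sketch and not the paper's method. It assumes epistemic uncertainty is approximated by the variance of an ensemble's predicted probabilities, and that a simple confidence-floor rule serves as the alternative policy when that uncertainty is high. All function names, the `threshold` value, and the `conf_floor` value are hypothetical choices made for illustration only.

```python
from statistics import pvariance
from typing import Sequence


def epistemic_uncertainty(ensemble_probs: Sequence[float]) -> float:
    """Assumed proxy: disagreement (population variance) among ensemble members."""
    return pvariance(ensemble_probs)


def l2d_policy(model_conf: float, expert_conf: float) -> str:
    """L2D-style rule: defer when the expert is estimated to be more likely correct."""
    return "human" if expert_conf > model_conf else "model"


def fallback_policy(model_conf: float, conf_floor: float = 0.9) -> str:
    """Hypothetical conservative rule that ignores the expert-correctness estimate."""
    return "model" if model_conf >= conf_floor else "human"


def route(
    ensemble_probs: Sequence[float],
    expert_conf: float,
    threshold: float = 0.01,  # hypothetical switching threshold
) -> str:
    """Pick a deferral policy based on epistemic uncertainty, then apply it."""
    mean_p = sum(ensemble_probs) / len(ensemble_probs)
    # Confidence of the ensemble's predicted class in a binary task.
    model_conf = max(mean_p, 1.0 - mean_p)
    if epistemic_uncertainty(ensemble_probs) > threshold:
        # High disagreement suggests a possible distribution shift, so the
        # learned deferral estimates are not trusted for this instance.
        return fallback_policy(model_conf)
    return l2d_policy(model_conf, expert_conf)


if __name__ == "__main__":
    print(route([0.95, 0.96, 0.94], expert_conf=0.80))  # low disagreement
    print(route([0.20, 0.90, 0.60], expert_conf=0.50))  # high disagreement
```

In this sketch, the second call switches to the fallback rule because the ensemble members disagree strongly, whereas the L2D-style rule alone would have kept the decision with the model. A real system would additionally need to handle the misclassification costs and human capacity constraints mentioned in the abstract, which this sketch omits.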